Good morning,

Here’s a question for you. When was the last time you did something to be a good member of your group? It could be any group you are part of.

Did you make a purchase from a brand that your group approves? Did you keep your mouth shut to keep your job? Did you exchange gossip in order to feel close to someone? Did you agree with an opinion from an influencer or publication that your group subscribes to, without thinking carefully about whether or not you actually agree with that opinion?

You get where I’m going, right?

Why people fall for misinformation

Last week, I attended a session on misinformation at the American Psychological Association’s annual conference that confirmed a few things I keep going back to. Here are three takeaways.

Misinformation is a problem of inattention and identity: According to Sander van der Linden, Professor of Social Psychology at Cambridge, there are two schools of thought on why people exchange misinformation. One is simply a problem of inattention; because our cognitive resources are limited, the sheer volume of what we consume makes it easy to get duped. The other is related to identity; people share and believe in fake news because they have deep-rooted identities related to their worldview that lead them to want to distort the interpretation of facts. And in reality, it’s probably both.

The best way to present alternative information to someone set in their beliefs is to figure out how to connect with them: According to Lynn Bufka, Senior Director of Practice Transformation and Quality at APA, it’s hard to change someone’s mind because we tend to seek out information that confirms what we already think and dismiss information that contradicts it. (In the first place, opinions are a combination of what we hear and how it appeals to our emotions.) So it’s critical to find a connection (emotional or attitudinal) with the person you are trying to reach, she explained, so that you can present accurate information in a light where they can at least consider it and how it relates to what they already believe.

Group membership plays a huge role in belief formation: According to Jay Van Bavel, Associate Professor of Psychology and Neural Science at NYU, there are two paths to believing conspiracy theories or other types of misinformation. 1) Those who are already generally skeptical of authority and have a conspiratorial mindset. 2) Those for whom the falsehood lines up with their identity as it relates to their group membership. If they feel their group has been victimized or targeted, or if their group leader is pushing a conspiracy, they will be attracted to it because they want to be a good group member.

An interesting extension of this is thinking about which groups are easily able to update their beliefs when presented with new information that may be contrary to prior information.

To update your beliefs, Van Bavel explained, you need to put a premium on robust, accurate, high-quality information. Scientists, for example, are trained to update their beliefs when they get new information; it’s a norm of good science.

In other words, it’s a group norm. And most people do not belong to identity groups that put a premium on accurate information (except, say, scientists, journalists, lawyers and a few other truth-driven professions).

In fact, it might actually signal your loyalty to a certain political party or perspective to share misinformation, he explained. Or, if you know the truth, you may not want to speak up because you’ll get pushback from the group.

Which took me back to the needs-based framework for news consumption that I have been playing with for a while.

(⭐️If you’re a new subscriber, I covered it in #24: introducing a “needs-based” approach to news consumption, a checklist.)

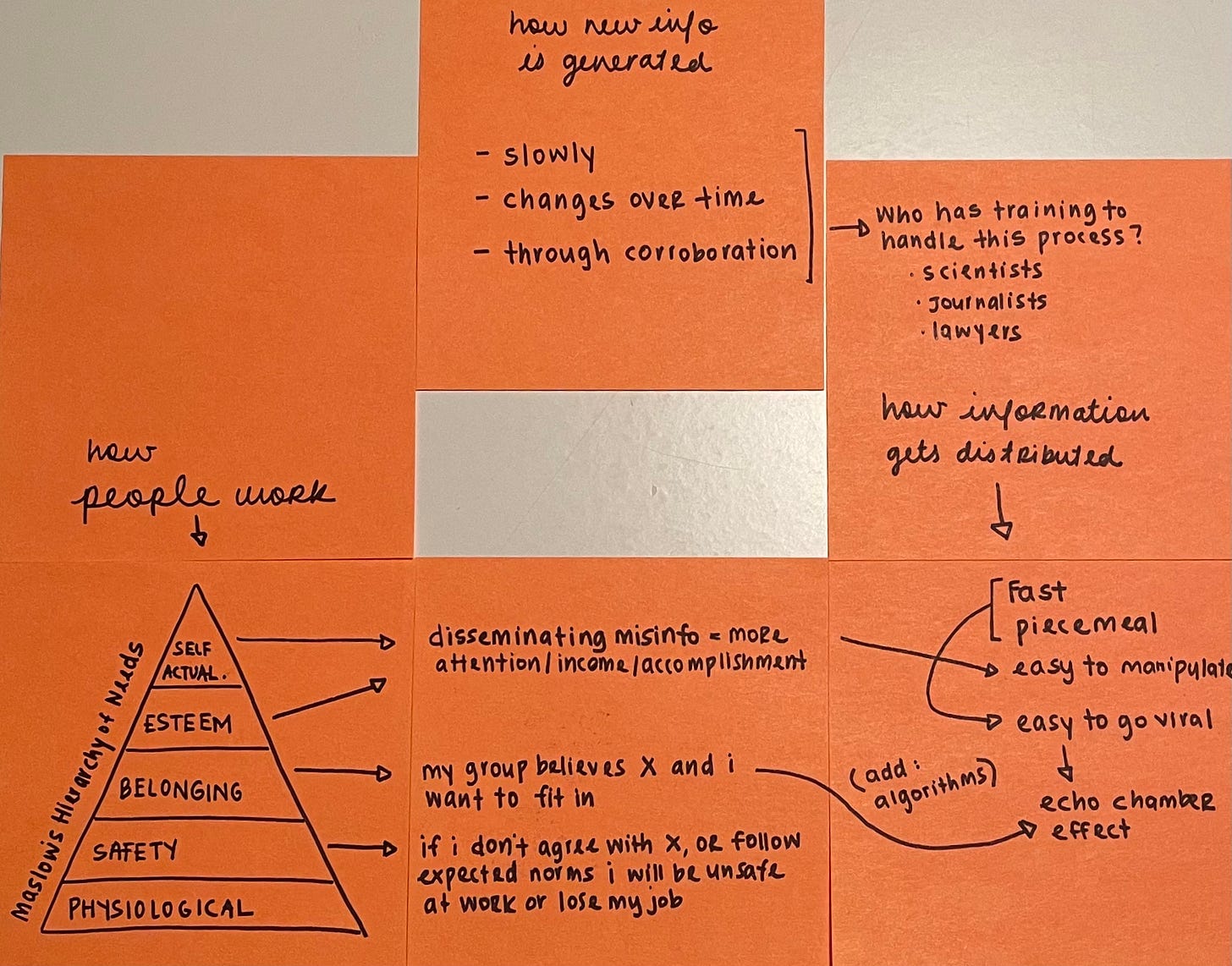

Based on the above, here’s my little post-it brainstorm on examples of needs that drive the exchange of misinformation:

How this plays out for the unvaccinated

Now on to a super important read from The Atlantic from July. It’s a conversation between Ed Yong, science journalist turned Covid-19 news navigator-in-chief for so many of us, and Rhea Boyd, a pediatrician and public health advocate who co-developed a national campaign to organize Black and Latino healthcare workers to provide info (and dispel misinfo) about the vaccines.

The point is that the “unvaccinated” that everyone on social media is trying to hate on are not a monolith. I highly recommend reading the whole thing, but for now, let’s consider our group-membership theory from above against this example:

First, let’s set aside the anti-vaxxers:

Boyd: Anti-vaxxers are incredibly vocal, and because of that, they’ve been a disproportionate focus of our vaccine outreach. But I think that they represent a small part of people in this country, and especially in our communities of color, an irrelevant part. In our work, we haven’t given much credence to their bluster. But the rampant disinformation that’s put out by this minority has shaped our public discourse, and has led to this collective vitriol toward the “unvaccinated” as if they are predominantly a group of anti-vaxxers. The people we’re really trying to move are not.

Yong: I’ve never thought of it that way. We’re used to thinking of anti-vaxxers as sowing distrust about vaccines. But you’re arguing that they’ve also successfully sown distrust about unvaccinated people, many of whom are now harder to reach because they’ve been broadly demonized.

Among the unvaccinated, there are those who still lack access or good information, and step by step that is being addressed through better interventions in appropriate community settings.

And for those who are stuck on some belief and maybe even contentious about it, a key intervention would be to change what their groups are saying:

Boyd: These very contentious encounters are driven by people really staunchly holding on to something that they are served by in some way. Maybe it’s the source that belief came from, and they need to believe other things that source says. Maybe they want camaraderie or collegiality with people around them, so they can feel that they’re in an in-group. People need to believe that what they believe is true. They feel threatened when challenged about something to which they feel beholden. The best way to address that may not be to actually challenge them one-on-one, but to shift what people around them are talking about. If you hear enough stories in your Facebook feed or from strangers in the store that reinforce the science, it’ll make what you’re saying less reasonable to you. And less useful to you. And once you don’t need to hold on to it, you can let it go.

Yong: Which is why community-based efforts are so important. People who will be swayed by Anthony Fauci are already listening to him. But, for example, public-health professionals I spoke with in Missouri are trying to get pastors, firefighters, and community leaders to act as trusted voices for their own people.

Which maps perfectly onto the group-membership theory and is a really, really helpful framework for setting aside judgment or anger and thinking empathetically about how another human being is operating.

For fun, here are my full post-it notes for this letter :)

There are so many factors involved in our current media system that are beyond our control (i.e., the whole right column of post-its), and I see the most hope in grasping the human behavior side of it for ourselves and our loved ones.

Jihii